Modern Web Development - Part 6

This is the sixth of ten parts of this blog post. The topics will be:

- 1: A New World

- 2: Architecting JavaScript

- 3: A Better CSS

- 4: Debugging

- 5: Joy and Pain of jQuery Plugins

- 6: Packaging Assets (this article)

- 7: Distributed Version Control

- 8: Working with Facebook

- 9: Mobile Pages

- 10: Deploying to the Cloud (upcoming)

The Problem

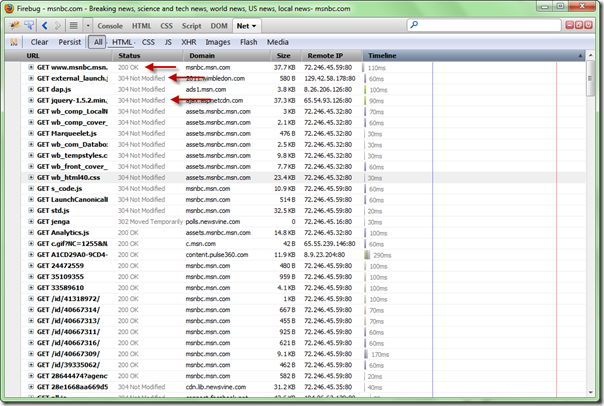

As you develop HTML apps, one of the issues you’ll face is that your application doesn’t come to the browser in one fell-swoop. A typical web page receives content from a number of sources. Below you can see the first bun of requests from a site (in this case MSNBC.com) as shown in Firebug:

While reducing the number and size of these requests is laudable, you will also want to take the browser cache into account. In the image above, you can see that some of the assets (e.g. jquery-1.5.2.min.js) returned a status of “304 Not Modified”. This status tells the browser that the copy of the asset already in its cache is still the latest version, so it doesn’t need to download a new one (as it hasn’t changed…or was “NOT MODIFIED” from its current version).
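To make the 304 behavior a little more concrete, here is a minimal sketch of the decision a server makes when the browser asks for an asset it may already have cached. It isn’t tied to ASP.NET or any other framework: the `ConditionalGet` class and `StatusFor` method are hypothetical names used only for illustration, and the sketch assumes ETag validation only (real servers also look at headers such as `If-Modified-Since`).

```csharp
using System;

// Hypothetical helper (not part of ASP.NET): models the server-side decision
// behind a "304 Not Modified" response, using ETag validation only.
public static class ConditionalGet
{
    // currentETag: validator for the asset as it exists on the server right now.
    // ifNoneMatch: value of the If-None-Match request header, or null when the
    //              browser has no cached copy to validate.
    public static int StatusFor(string currentETag, string ifNoneMatch)
    {
        bool cachedCopyIsCurrent =
            ifNoneMatch != null &&
            string.Equals(ifNoneMatch, currentETag, StringComparison.Ordinal);

        // 304: only headers are sent and the browser reuses its cached body.
        // 200: the full asset is sent again.
        return cachedCopyIsCurrent ? 304 : 200;
    }
}
```

The point is that a 304 still costs a round trip to the server, but it skips the download of the asset itself.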

For me, this meant that I wanted two things from packaging of assets:

- Reduce the size and number of downloads whenever possible

- Let the browser cache do its job

What Do I Mean by Packaging?

Part of the work involved in building a web project is taking all those assets (JavaScript, style sheets and even images) and making them efficient for your pages to use. In the case of JavaScript and style sheets, you will want to both compress (i.e. minify) and batch (i.e. combine multiple files into one). So let’s talk about them separately.

Packaging Style Sheets

While most solutions for packaging assets take style sheets into account, I decided that the dynamic style sheet languages (LESS and SASS) already do this adequately. (If you haven’t read my post on using dynamic style sheet languages, see it here.)

While using the @import declaration will merge style sheets, you may also want to minify them. In the case of dotless (which I am using to deliver my LESS files), you can turn on minification of the style sheets in the configuration:

```xml
<dotless minifyCss="false"
         cache="true"
         web="false" />
```

The minifyCss property can be turned on (I usually do this in my Web.Release.config file) so that the output of your LESS files is minified to decrease its size.
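One way to flip that setting only for release builds is a config transform. The fragment below is a sketch, not my exact file: it assumes your project uses the standard Web.config transformation files and that the `<dotless>` element sits directly under `<configuration>` in your Web.config.

```xml
<!-- Web.Release.config: assumes <dotless> is a direct child of <configuration> -->
<configuration xmlns:xdt="http://schemas.microsoft.com/XML-Document-Transform">
  <!-- Overwrite only the minifyCss attribute; other attributes are left alone -->
  <dotless minifyCss="true" xdt:Transform="SetAttributes(minifyCss)" />
</configuration>
```

With this in place, the base Web.config can keep minifyCss="false" for day-to-day development.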

Packaging JavaScript

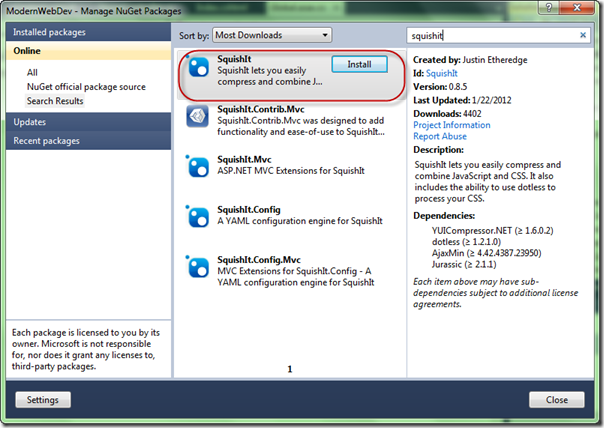

To help with the merging and minimizing of my JavaScript, I use a great library from Justin Etheredge (@JustinEtheredge) called SquishIt (though I won’t discuss the importance of the capital letters). This library uses commonly used open source projects to do the minimizing and merging but packages it so you can call it from ASP.NET code easily. To start off with SquishIt, I would suggest just adding it to your project via Nuget:

If you read the docs for SquishIt, Justin shows it used directly in your view code. In Razor, that looks something like this:

```html
<!-- _Layout.cshtml -->
@using SquishIt.Framework
<!DOCTYPE html>
<html>
<head>
  ...
</head>
<body>
  @RenderBody()
  @* Render returns a plain string, so Html.Raw keeps Razor from HTML-encoding the tags *@
  @Html.Raw(Bundle.JavaScript()
      .Add("~/scripts/jquery-1.7.1.min.js")
      .Add("~/scripts/jquery-ui-1.8.17.min.js")
      .Render("~/scripts/combined.js"))
</body>
</html>
```

The idea is that you add individual scripts (or whole directories) into a bundle of related scripts. When Render is called, it determines whether to package up all the scripts into a single script (named combined.js in this case) or to leave them as separate scripts.
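To illustrate that decision, here is a rough model of it in plain C#. This is not SquishIt’s code: `BundleSketch` and its `Render` method are hypothetical stand-ins, and the sketch leaves out the actual merging and minifying of files. It only shows the shape of the output in each mode.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical model of the render decision; this is NOT SquishIt's implementation.
public static class BundleSketch
{
    public static string Render(IEnumerable<string> scripts, string combinedPath, bool debug)
    {
        if (debug)
        {
            // Debug: one tag per source file, so each script stays separate and readable.
            return string.Join("\n", scripts.Select(s => Tag(s)));
        }

        // Release: a single tag pointing at the merged file.
        return Tag(combinedPath);
    }

    private static string Tag(string src)
    {
        return "<script type=\"text/javascript\" src=\"" + src + "\"></script>";
    }
}
```

In the real library the debug/release choice isn’t passed in as an argument the way it is in this sketch.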

I find that managing bundle definitions in the markup makes the code harder for me to maintain, so I decided to do it in extension methods (of HtmlHelper):

```csharp
using System.Web.Mvc;
using SquishIt.Framework;

public static class HelpHelpersExtensions
{
    // Renders the script tags for the shared libraries
    public static MvcHtmlString PackageLibs(this HtmlHelper htmlHelper)
    {
        var client = Bundle.JavaScript()
            .Add("~/scripts/jquery-1.7.1.min.js")
            .Add("~/scripts/jquery-ui-1.8.17.min.js")
            .Render("~/scripts/combined.js");

        // Wrap the markup so Razor doesn't HTML-encode it
        return new MvcHtmlString(client);
    }
}
```

To get this into my Razor files, I simply call the HtmlHelper extension method:

```html
<!-- _Layout.cshtml -->
<!DOCTYPE html>
<html>
<head>
  ...
</head>
<body>
  @RenderBody()
  @Html.PackageLibs()
</body>
</html>
```

This works fine…except that I am packaging the minimized versions of the libraries in all cases. This will make debugging more difficult, so it would be better if I could do this differently in debug or release builds. Easy:

```csharp
public static MvcHtmlString PackageLibs(this HtmlHelper htmlHelper)
{
    var client = Bundle.JavaScript()
#if DEBUG
        .Add("~/scripts/jquery-1.7.1.js")
        .Add("~/scripts/jquery-ui-1.8.17.js")
#else
        .Add("~/scripts/jquery-1.7.1.min.js")
        .Add("~/scripts/jquery-ui-1.8.17.min.js")
#endif
        .Render("~/scripts/combined.js");

    return new MvcHtmlString(client);
}
```

This is better, as debug builds no longer use the minimized versions. In release mode, though, I don’t want to serve my local copies; I would rather rely on a content delivery network (CDN) for very common scripts (mostly jQuery and jQuery UI). SquishIt lets me do this by adding a CDN link (with the local link as a backup) using the AddRemote method:

```csharp
public static MvcHtmlString PackageLibs(this HtmlHelper htmlHelper)
{
    var client = Bundle.JavaScript()
#if DEBUG
        .Add("~/scripts/jquery-1.7.1.js")
        .Add("~/scripts/jquery-ui-1.8.17.js")
#else
        // Local path first, CDN URL second
        .AddRemote("~/scripts/jquery-1.7.1.min.js",
                   "http://ajax.googleapis.com/ajax/libs/jquery/1.7.1/jquery.min.js")
        .AddRemote("~/scripts/jquery-ui-1.8.17.min.js",
                   "http://ajax.googleapis.com/ajax/libs/jqueryui/1.8.17/jquery-ui.min.js")
#endif
        .Render("~/scripts/combined.js");

    return new MvcHtmlString(client);
}
```

Pretty clean so far. And as I use plugins, I’ll just add them here so all my plugins work. But one issue for me is that the Render method uses the debug flag in the web.config to determine whether it merges all the scripts into a single file. For my needs, I want the libraries to *always* be separate. To accomplish this, the SquishIt framework allows you to call **ForceDebug** before Render:

```csharp
public static MvcHtmlString PackageLibs(this HtmlHelper htmlHelper)
{
    var client = Bundle.JavaScript()
#if DEBUG
        .Add("~/scripts/jquery-1.7.1.js")
        .Add("~/scripts/jquery-ui-1.8.17.js")
#else
        .AddRemote("~/scripts/jquery-1.7.1.min.js",
                   "http://ajax.googleapis.com/ajax/libs/jquery/1.7.1/jquery.min.js")
        .AddRemote("~/scripts/jquery-ui-1.8.17.min.js",
                   "http://ajax.googleapis.com/ajax/libs/jqueryui/1.8.17/jquery-ui.min.js")
#endif
        // Always keep the libraries as separate scripts
        .ForceDebug()
        .Render("~/scripts/combined.js");

    return new MvcHtmlString(client);
}
```

The way this works is crucial for me, as I want to keep my library scripts (e.g. jQuery, jQuery UI and plugins) separate so I can gain the benefit of the browser cache as much as possible. When I create a bundle of my own scripts, I go ahead and let SquishIt merge them into a single file. In fact, for my own scripts, instead of adding the scripts one by one, I add all the scripts in the js directory:

```csharp
public static MvcHtmlString PackageScripts(this HtmlHelper htmlHelper)
{
    // Get a list of the scripts
    var path = HttpContext.Current.Server.MapPath("~/js/");
    var scripts = Directory.EnumerateFiles(path, "*.js", SearchOption.TopDirectoryOnly);
    var scriptPaths = new List<string>();

    foreach (var script in scripts)
    {
        var scriptName = Path.GetFileName(script);

        // Only add the scripts that aren't generated
        if (!scriptName.StartsWith("ModernWebDev"))
        {
            var filename = string.Concat("/js/", scriptName);
            scriptPaths.Add(filename);
        }
    }

    var client = Bundle.JavaScript()
        .Add(scriptPaths.ToArray())
#if DEBUG
        .ForceDebug()
#else
        .WithMinifier<MsMinifier>()
        .ForceRelease()
#endif
        .Render("~/js/ModernWebDev_#.js");

    return new MvcHtmlString(client);
}
```

The first section of the method just uses System.IO to search through the directory for all the JavaScript files to include. There are a couple of new wrinkles near the bottom of the method, though.

First, the **WithMinifier** method allows you to specify which minifier to use (I’ve had better luck with the Microsoft minifier, but you can use whichever one you want). In the **Render** method I’ve also included a pound sign (#) in the path to the merged JavaScript file. This tells SquishIt to generate a unique number (I believe based on a hash of the current build) so that the generated file is versioned. As new builds are created, the new file name guarantees that browsers download the new version instead of accidentally running an old version of the code from cache.
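As a rough illustration of the versioned file name idea, the sketch below swaps the pound sign for a hash. It assumes the number is derived from the merged script content; the `VersionedName` class is a hypothetical name of my own, and SquishIt’s actual hashing scheme may well differ.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Hypothetical illustration of a hashed file name; SquishIt's own scheme may differ.
public static class VersionedName
{
    // pathTemplate: a path containing a '#' placeholder, e.g. "~/js/ModernWebDev_#.js"
    // combinedContent: the merged script text the hash is computed from (an assumption)
    public static string For(string pathTemplate, string combinedContent)
    {
        using (var md5 = MD5.Create())
        {
            byte[] hash = md5.ComputeHash(Encoding.UTF8.GetBytes(combinedContent));
            string hex = BitConverter.ToString(hash).Replace("-", "");

            // Same content gives the same name; changed content gives a new name,
            // which the browser treats as a brand new file.
            return pathTemplate.Replace("#", hex);
        }
    }
}
```

Because the name only changes when the input changes, an unchanged bundle keeps being served from browser cache between deployments.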

Be aware that merging the scripts (e.g. with ForceRelease) will actually generate the ModernWebDev\_#.js file in the file system. Because the code builds its list of scripts dynamically, we need to ensure that the generated file isn’t included in that list (otherwise the bundle would pull in its own earlier output and the same methods would be defined again).

You may notice that since I am building all my scripts into one file, all scripts will be loaded on the first page and then served from cache on all subsequent pages. There isn’t a right or wrong here. I made the decision that loading all of it and keeping it in browser cache was easier and faster than segmenting it. But my site isn’t enormous, and when you start working with very large applications you will find the need to break it up into modules (e.g. multiple different merged scripts, probably along directory lines is how I’d do it).
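If you do go the module route, splitting along directories could start with something like the sketch below. I haven’t done this on my site: `ModuleSketch` is a hypothetical helper, and the paths in the usage note are made up for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: groups script paths by directory so each directory
// could become its own merged bundle.
public static class ModuleSketch
{
    public static Dictionary<string, List<string>> ByDirectory(IEnumerable<string> scriptPaths)
    {
        return scriptPaths
            // Key is everything up to and including the last '/'
            .GroupBy(p => p.Substring(0, p.LastIndexOf('/') + 1))
            .ToDictionary(
                g => g.Key,
                g => g.OrderBy(p => p, StringComparer.Ordinal).ToList());
    }
}
```

Given paths like "~/js/admin/users.js" and "~/js/shop/cart.js", this yields one list per directory, and each list could then be handed to its own Bundle.JavaScript() call with its own Render path.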

**What about ASP.NET 4’s Web Optimization Stuff?**

As you may know, Microsoft (as of the writing of this article) has just released the Beta of ASP.NET MVC 4 and that includes a new stack for optimizing assets called **System.Web.Optimization**. There is an article that covers the basics (though it’s a little out of date) by Scott Guthrie:

[http://shawnw.me/xuPcoo](http://shawnw.me/xuPcoo)

I considered moving to this as it’s pretty slick and pluggable, but it was missing some key features for me:

- Packaging of groups of assets but not including them separately (the Libraries packaging is an example of this).

- No support for CDN delivery of libraries.

- There wasn’t a clear way to skip minification in debug versus release mode, or to provide different versions of a script for those modes.

Because of these issues, I am sticking with SquishIt (and following the adage that says “old code is good code”). I am sure System.Web.Optimization will improve (either by people writing plugins for it or MS fixing it) to address these issues. For many projects that don’t need the kind of control I did, it is a great solution.

**In Action**

You can see it in action in the latest version of this example:

[http://wilderminds.blob.core.windows.net/downloads/modernwebdev\_6.zip](http://wilderminds.blob.core.windows.net/downloads/modernwebdev_6.zip)